Project Aria is Meta's research platform for egocentric AI: a sensor-rich wearable device designed to capture first-person, multi-modal data at scale. LiveMaps builds on this data to create persistent, shared spatial representations of the world. As Design Lead for Contextual AI, I use Aria glasses to define how AI systems understand and respond to a user's physical environment, activities, and intentions in real time.

About the project

Led research design for Project Aria and LiveMaps, defining interaction paradigms for egocentric machine perception and multi-modal AI systems. Worked across hardware, sensing, spatial computing, and machine perception teams to establish real-world data foundations for AI and robotics.

Co-authored "Project Aria: A new tool for egocentric multi-modal AI research" (arXiv:2308.13561).

Contextual AI

Defining adaptive, personalized systems and interaction models for AI-driven assistance in the physical world. The work explores how AI can understand and respond to a user's environment, activities, and intentions in real time.

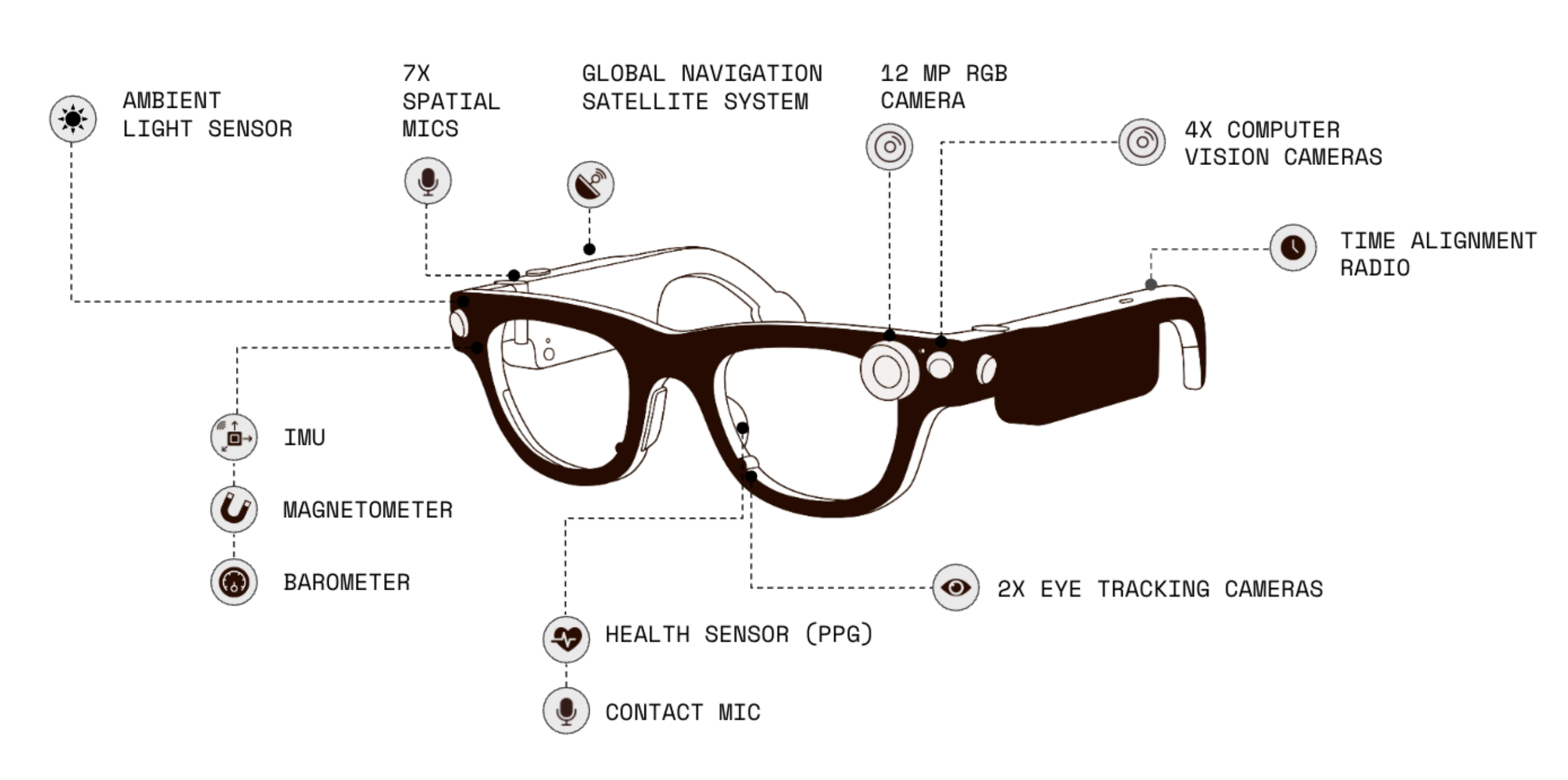

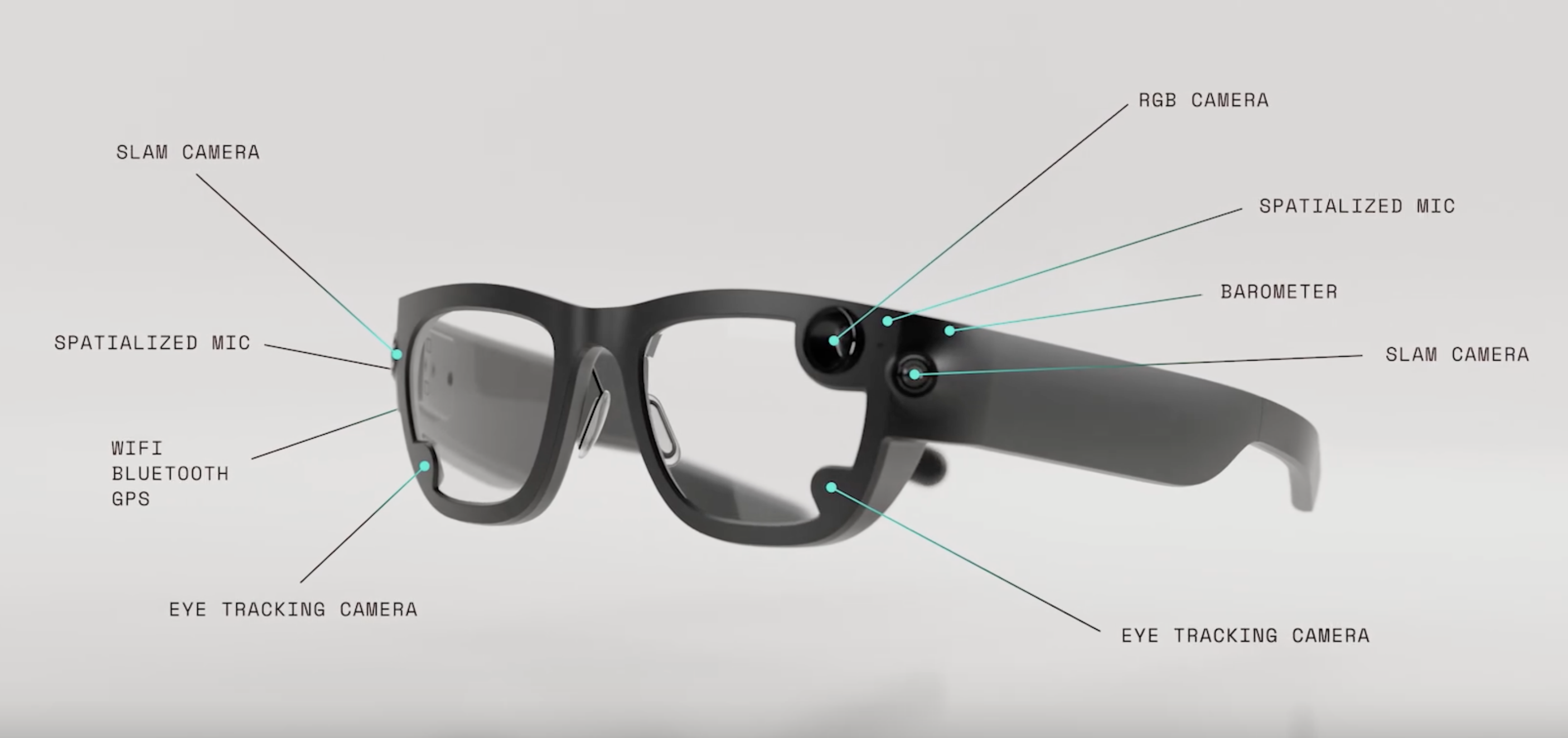

The Glasses

Project Aria glasses are sensor-rich wearable devices equipped with RGB cameras, SLAM cameras, eye tracking, spatialized microphones, a barometer, and connectivity modules. Since its debut in 2020, the platform has enabled researchers worldwide to advance machine perception and AI.

Resources

- Project Aria - Official website and research tools

- arXiv:2308.13561 - "Project Aria: A new tool for egocentric multi-modal AI research"

Detailed case study materials are subject to NDA.

If you're interested, ask me for a quick walkthrough.